Artificial intelligence has a new challenge: whether and how to alert people who may not know they're talking to a robot.

Google showed off a computer assistant this week that makes convincingly human-sounding phone calls, at least in its prerecorded demonstration. But the real people in those calls didn't seem to be aware they were talking to a machine. That could present thorny issues for the future use of AI.

Among them: Is it fair - or even legal - to trick people into talking to an AI system that effectively records all of its conversations? And while Google's demonstration highlighted the benign uses of conversational robots, what happens when spammers and scammers get hold of them?

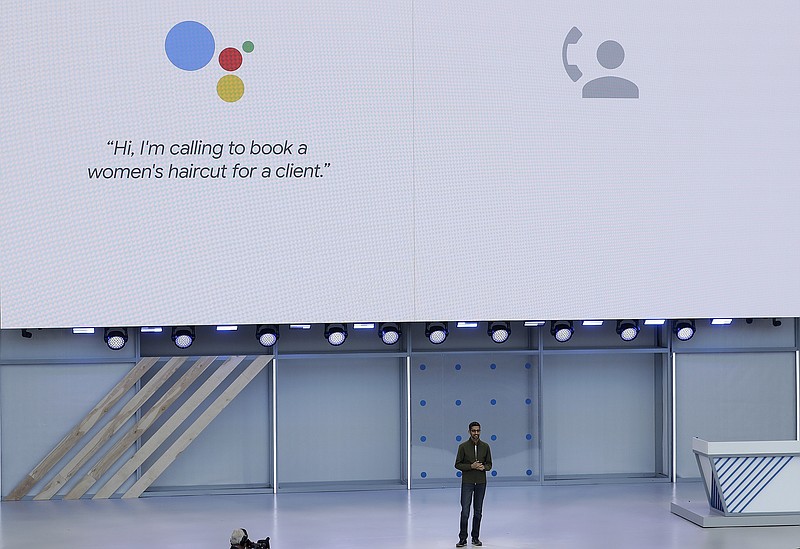

Google CEO Sundar Pichai elicited cheers on Tuesday as he demonstrated the new technology, called Duplex, during the company's annual conference for software developers. The assistant added pauses, "ums" and "mmm-hmms" to its speech in order to sound more human as it spoke with real employees at a hair salon and a restaurant.

"That's very impressive, but it can clearly lead to more sinister uses of this type of technology," said Matthew Fenech, who researches the policy implications of AI for the London-based organization Future Advocacy.

Fenech said it's not hard to imagine nefarious uses of similar chatbots, such as spamming businesses, scamming seniors or making malicious calls using the voices of political or personal enemies.

Pichai and other Google executives tried to emphasize that the technology is still experimental and will be rolled out cautiously. It's not yet available on consumer devices.

"It's important to us that users and businesses have a good experience with this service, and transparency is a key part of that," Google engineers Yaniv Leviathan and Yossi Matias, who helped design the new technology, wrote in a Tuesday blog post.

It's unclear how the company will navigate existing telecommunications laws, which can vary by state or country.

Anti-wiretapping laws in California and several other states already make it illegal to record phone calls without the consent of both the caller and the person being called. The Federal Communications Commission has also been grappling with rules for robocalls, the unsolicited and automatically dialed calls made by telemarketers.

Such calls are typically prerecorded monologues, but more businesses and organizations are employing machine-learning techniques to respond to a person's questions with a natural-sounding conversation, in hopes that the people they call will be less likely to hang up.